Reportage

Source: Nikkei Business Online (in Japanese)

This article is part of a Nikkei Business Online interview series titled "The Emergence and Potential of the Sei-katsu-sha Interface Market."

Cutting-edge technologies like 5G and IoT have digitalized all manner of things, creating interfaces through which sei-katsu-sha, businesses and objects can communicate directly with one another. These interfaces are now being developed into new services, and Hakuhodo calls the market emerging from them the "Sei-katsu-sha Interface Market." Aiming to become a key player in this market, the company is developing a range of technologies. Among them is AR Cloud technology, which it is building together with New York City Media Lab (NYC Media Lab). Here, NYC Media Lab Executive Director Justin Hendrix and Masato Aoki, General Manager of Hakuhodo's R&D Division, discuss the collaboration and the media potential of AR Cloud technology.

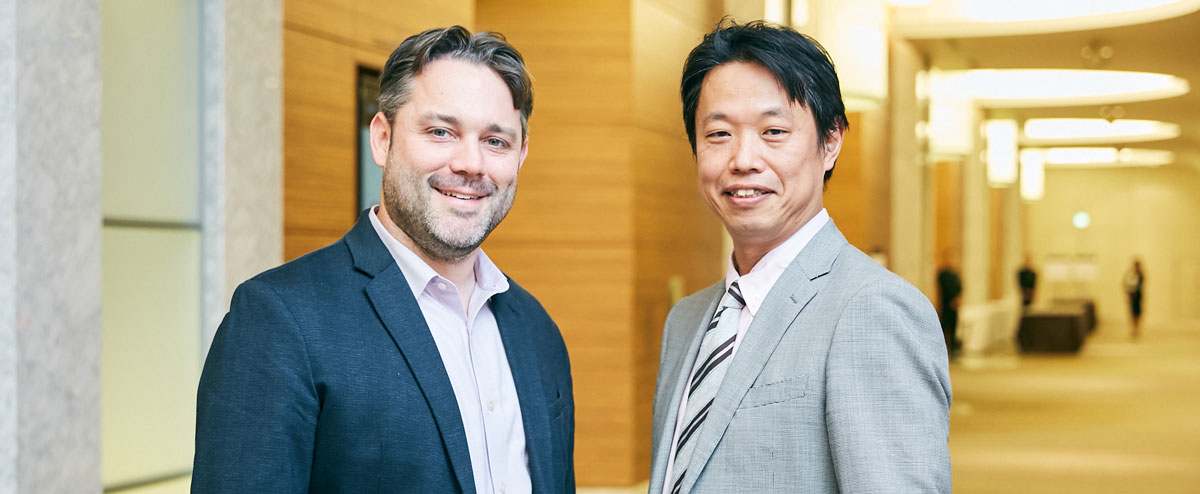

Justin Hendrix, Executive Director of New York City Media Lab and Masato Aoki, General Manager of Hakuhodo’s R&D Division

What is the New York City Media Lab, which Hakuhodo is a member of?

AOKI: Developing next-generation interface technology is essential to generating innovation. At Hakuhodo, we believe that rather than developing technology only on our own, we need to partner with a variety of technology experts and build an ecosystem in which business, academia and government work together. Hakuhodo partnered with NYC Media Lab in early 2019 to research AR Cloud technology jointly. Mr. Hendrix, can you explain why NYC Media Lab was founded and tell us about its activities?

HENDRIX: NYC Media Lab was established in 2010 as a public-private partnership by New York City, which was striving to become a place where the latest emerging technologies, including VR/AR, come together. Focusing on engineering, computer science, design and many other disciplines related to emerging media technology, we have become a hub connecting industry, academia and government. Our university partners include Columbia University, New York University, The New School, the Pratt Institute, the School of Visual Arts, and The City University of New York (CUNY). Hakuhodo is now one of our member companies, along with other ad agencies and a range of media companies, publishers, mobile operators and technology companies. Among other activities, we run prototyping and R&D projects to support innovation, support start-ups spun out of the universities, and organize events that bring business executives together and help build communities.

We've also opened RLab, a new facility in the Brooklyn Navy Yard specializing in AR/VR and spatial computing. Start-ups gather there to research new interfaces and build new applications, making it a hub for New York's cutting-edge tech industry. We invite executives from partner companies to discuss what needs to be done to create new industries with the latest technology, and we invited a team from Hakuhodo as well. As we pursue our research, we also share information with universities, start-ups and other members of the NYC community.

Justin Hendrix, Executive Director, New York City Media Lab

Media will become part of the environment through VR/AR

AOKI: Hakuhodo believes that we are about to see the advent of the Sei-katsu-sha Interface Market and it seems that NYC Media Lab’s predictions for 2030 include a similar concept. Mr. Hendrix, how do you see the media changing toward 2030?

HENDRIX: We will likely see a number of innovations and changes by 2030. First, the nature of what media produces will change significantly. Until now, media has been like a mirror reflecting things that exist in reality. In the future, however, media will also computationally generate and project things that do not exist in the physical world.

There will also be innovation in interfaces. The last 20 years of media innovation have largely taken place on two-dimensional screens. Future interfaces, though, will include voice and VR/AR devices that let media move into the environment and become part of the world around us. With advances in natural language processing, computer vision, machine learning and other AI technologies, the way we exchange information with media will change. Once synthetic, or computationally generated, media becomes common in the next few years, we will be able to automate the creation of text, audio and video content, and human creativity will play a major role in powering those systems and shaping what they produce. And when computationally generated media is combined with personal data, new benefits will emerge.

The Hakuhodo team, NYC Media Lab and Professor Steven Feiner of Columbia University are currently researching how to keep virtual information synchronized with a constantly changing physical reality, under the theme "AR interaction that takes reality into consideration." New capture technologies like these will likely give rise to new kinds of media experiences as well. AI will be able to generate content, characters and avatars automatically, and these will likely be used in marketing too.

We need to think about how to design communication for this new age, including how personal information is shared and protected, how content is delivered to consumers, and what standards should govern the use of these technologies.

Constructing an industry-academia-government ecosystem to bring technologies together

AOKI: When media becomes part of our environment, as Mr. Hendrix described, we will need to design new interfaces for cyber-physical spaces, where physical space and cyberspace are closely blended, as well as new ways of communicating within them. One of the ways we have approached this area is by joining NYC Media Lab to research AR Cloud technology.

Masato Aoki, General Manager, R&D Division, Hakuhodo Inc. and General Manager, Marketing Technology Development Division, Hakuhodo DY Holdings Inc.

AR Cloud is a technology that takes current augmented reality further, enabling real-time experience sharing and advanced recognition of physical spaces. The former lets multiple users communicate and collaborate; the latter captures complex physical spaces with high precision.

Once these are achieved, we will be able to create a digital copy of the real world, upload the related data to the cloud, and let multiple users share the same experience at the same time. For example, if you wore smart glasses while walking around town, information on restaurants, vacant apartments and the like would appear before your eyes. Reality itself would become a form of media, turning every physical space into a medium.

If our joint research with Professor Steven Feiner of Columbia University, which Mr. Hendrix mentioned, succeeds, we will be able to enjoy new three-dimensional sensory experiences. Imagine, for instance, TV commercials in which balls fly out of the screen into your living room and you throw or kick them back.

HENDRIX: How should we interact with information that exists only in virtual space? What gestures would work best for controlling it? There are countless questions we need to examine from many perspectives, such as user experience and product design. But the future we are describing will arrive soon.

AOKI: The ecosystem of industry, academia and government you've created at NYC Media Lab was a major takeaway for us at Hakuhodo. With the advent of the Sei-katsu-sha Interface Market, it will be difficult for any single company or technology to create significant value for people. We are in an age where we need to build ecosystems. Through them we can improve our technologies by collaborating with universities, work with governments to create places where those technologies can be deployed, and connect different technologies to one another. I would like to bring this concept back to Japan and perhaps form a "Tokyo City Media Lab," ideally with the cooperation of you and NYC Media Lab.

HENDRIX: That's a fantastic idea. I'd like to see NYC Media Lab and a Tokyo City Media Lab become hubs that bring value to society and consumers through technology. Let's work together to make it happen.


Read the original article at: https://special.nikkeibp.co.jp/atclh/ONB/20/hakuhodo01/02/ (in Japanese)